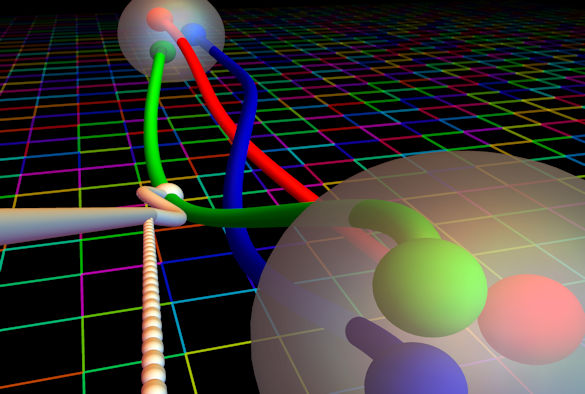

Image credit: Evan Berkowitz

Experiments that measure the lifetime of neutrons reveal a perplexing and unresolved discrepancy. While this lifetime has been measured to a precision within 1 percent using different techniques, apparent conflicts in the measurements offer the exciting possibility of learning about as-yet undiscovered physics.

Now, an international team of scientists, including a particle physicist from the University of Liverpool, has enlisted powerful supercomputers to calculate a quantity known as the “nucleon axial coupling,” or gA – which is central to our understanding of a neutron’s lifetime – with unprecedented precision. Their method offers a clear path to further improvements that may help to resolve the experimental discrepancy.

To achieve their results, the researchers created a microscopic slice of a simulated universe to provide a window into the subatomic world.

The study, led by UC Berkeley, is published today (7 June 2018) in the journal Nature.

The nucleon axial coupling is more exactly defined as the strength with which one component (known as the axial component) of the “weak current” of the Standard Model of particle physics couples to the neutron. The weak current is given by one of the four known fundamental forces of the universe and is responsible for radioactive beta decay – the process by which a neutron decays to a proton, an electron, and a neutrino.

In addition to measurements of the neutron lifetime, precise measurements of neutron beta decay are also used to probe new physics from beyond the Standard Model. Nuclear physicists seek to resolve the lifetime discrepancy and to complement experimental results by determining gA more precisely.

The researchers turned to quantum chromodynamics (QCD), a cornerstone of the Standard Model that describes how quarks and gluons interact with each other. Quarks and gluons are the fundamental building blocks for composite particles including neutrons and protons. The dynamics of these interactions determine the mass of the neutron and proton, and also the value of gA.

QCD is the accepted theory of the strong nuclear interaction, the force that “binds” the quarks together inside protons and neutrons through gluon exchange. Although this theory is in principle quite simple, the intensity of this interaction (hence the name “strong force”) makes any analytic computation extremely difficult, if not completely impossible. This is where Lattice QCD comes in.

Dr Nicolas Garron, a Research Fellow in the University’s Department of Mathematical Sciences who was part of the research team, said: “We can simulate the theory on a very powerful computer and obtain predictions where other analytic methods fail.”

“However, due to the nature of the strong interaction, Lattice QCD requires extraordinary computing resources. This is why, although Lattice QCD was invented in 1974, it’s only recently that we could obtain precise and realistic predictions.”

The team’s new theoretical determination of gA is based on a simulation of a tiny piece of the universe – the size of a few neutrons in each direction. They simulated a neutron transitioning to a proton inside this tiny section of the universe, in order to predict what happens in nature.

However, another unexpected difficulty stood in the way.

André Walker-Loud of UC Berkeley, who led the new study, said: “Calculating gA was supposed to be one of the simple benchmark calculations that could be used to demonstrate that lattice QCD can be utilized for basic nuclear physics research, and for precision tests that look for new physics in nuclear physics backgrounds. It turned out to be an exceptionally difficult quantity to determine.”

This is because lattice QCD calculations for neutrons and protons are complicated by exceptionally noisy statistical results that had thwarted major progress in reducing uncertainties in previous gA calculations. Some researchers had previously estimated that it would require the next generation of the nation’s most advanced supercomputers to achieve a 2 percent precision for gA by around 2020.

The team participating in the latest study developed a way to improve their calculations of gA using an unconventional approach and supercomputers at Oak Ridge National Laboratory and Lawrence Livermore National Laboratory.

Another essential ingredient of this computation is a procedure called renormalisation. In a nutshell, this procedure converts the result given by a numerical simulation into a “physical” prediction that can be compared to experimental measurements.

The Theoretical Physics division at the University of Liverpool has considerable expertise in this area, and some techniques that are crucial for this work were invented by Liverpool researchers.

Dr Garron added: “Numerical methods and High Performance Computing play a central role in our theoretical understanding of the fundamental laws of nature. Lattice QCD is a typical example of how theoretical physicists and computer scientists collaborate together to push the boundaries.”

This latest calculation also places tighter constraints on a branch of physics theories that stretch beyond the Standard Model – constraints that exceed those set by powerful particle collider experiments at CERN’s Large Hadron Collider.

The team was assisted by the Oak Ridge Leadership Computing Facility staff to efficiently utilize their 64 million Titan-hour allocation, and they also turned to the Multiprogrammatic and Institutional Computing program at Livermore Lab, which gave them more computing time to resolve their calculations and reduce their uncertainty margin to just under 1 percent.

Already, the team has applied for time on a next-generation supercomputer at Oak Ridge Lab called Summit, which would greatly speed up the calculations.

The research was led by UC Berkeley and included expertise from the University of Liverpool, as well as researchers from the University of North Carolina, RIKEN BNL Research Center at Brookhaven National Laboratory, Lawrence Livermore National Laboratory, the Jülich Research Center in Germany, the College of William & Mary, Rutgers University, the University of Washington, the University of Glasgow, NVIDIA Corp., and Thomas Jefferson National Accelerator Facility.